Difference between revisions of "Team:Cambridge-JIC/Autofocus"


<li><p>focus stacking - for imaging 3-dimensional objects</p></li>

</ul>

<p>Last but not least, autofocus simply makes work easier for the person operating the OpenScope. Manual focusing, although not very challenging, can still be fiddly, especially for inexperienced users. This is where the autofocus algorithm helps. We tried out various methods for this task and settled on those that worked best empirically; the code for the other methods is still present in our source code, although it is unused.</p>

</div></div></section>

<div style="width: 100%; padding: 0% 10%; margin: 30px 0px;color:#000">

<h3>The Solution</h3>

<p>The strategy of the autofocus algorithm is to calculate a <b>focus score</b> for each frame while gradually changing the sample-objective distance, until the score is maximized; the maximum corresponds to the most sharply focused image. The physics behind this is simple: the image of an object viewed through a lens is formed at a particular distance from the lens. At any shorter or longer distance, the light rays from a single point on the object do not converge to a point, so the image is blurred. The focus score can therefore be regarded as a function of the sample-objective distance with a single maximum.</p>
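<p>A minimal sketch of a variance focus score, assuming frames arrive as 2-D NumPy arrays of pixel intensities. The helper names <code>focus_score</code> and <code>gaussian_blur</code> are hypothetical and not taken from the OpenScope software package; the blur is written with plain NumPy so the example has no other dependencies. Blurring the same image with an increasing standard deviation should lower its variance, which is the single-peak behaviour the focus score relies on.</p>

```python
import numpy as np


def focus_score(frame: np.ndarray) -> float:
    """Variance of pixel intensities: sharper frames have stronger
    pixel-to-pixel contrast and therefore a larger variance."""
    return float(np.var(frame.astype(np.float64)))


def gaussian_blur(image: np.ndarray, sigma: float) -> np.ndarray:
    """Separable Gaussian blur implemented with NumPy only (hypothetical helper).

    Edges are handled by reflecting the image; sigma <= 0 returns an
    unblurred float copy.
    """
    img = image.astype(np.float64)
    if sigma <= 0:
        return img
    radius = int(np.ceil(3 * sigma))
    x = np.arange(-radius, radius + 1)
    kernel = np.exp(-(x ** 2) / (2.0 * sigma ** 2))
    kernel /= kernel.sum()  # normalise so overall brightness is unchanged
    padded = np.pad(img, radius, mode="reflect")
    # Convolve every row, then every column; "valid" mode trims the padding.
    rows = np.apply_along_axis(np.convolve, 1, padded, kernel, mode="valid")
    return np.apply_along_axis(np.convolve, 0, rows, kernel, mode="valid")


# Artificially blur a random "sharp" test image with growing standard deviations.
rng = np.random.default_rng(0)
sharp = rng.random((64, 64))
for sigma in (0, 1, 2, 4):
    print(sigma, round(focus_score(gaussian_blur(sharp, sigma)), 5))
```

<p>The printed scores should fall as <code>sigma</code> grows; on the microscope the same score would instead be computed on each camera frame as the stage moves.</p>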

<p>There are, however, several issues to consider. Multiple methods exist for calculating the focus score, and there are also many ways to scan for the position with the best score. The challenge was to find the most robust solutions. Here is what we tried:</p>

<ul>

<li><p>Tested variance-based focus scores, as recommended by both sources [1][2], on artificially blurred images (Gaussian blur with different standard deviations). This worked well. <b>Variance was chosen as the focus measure to implement since it is quick to evaluate</b> (speed being an important priority), and NumPy already provides a function for it.</p></li>

<li><p>Implemented an autofocus method for multiresolution search (described in the textbook [2], section 16.3.3).</p><!--</li>-->

<ul>

<li><p>Implemented a DWT (Discrete Wavelet Transform) to decompose the image into low-resolution versions. This was expected to allow faster computation and autofocusing. In practice, there was no significant computational advantage, so low-resolution images were not used in the final algorithm.</p></li></ul>
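<p>The multiresolution idea can be illustrated with one or more levels of a 2-D Haar wavelet transform, keeping only the low-resolution approximation band. This is a sketch under assumptions: the wavelet actually used is not stated here, so Haar is chosen for simplicity, and <code>haar_approximation</code> is a hypothetical helper (a library such as PyWavelets offers <code>pywt.dwt2</code> for general wavelets).</p>

```python
import numpy as np


def haar_approximation(image: np.ndarray, levels: int = 1) -> np.ndarray:
    """Return the LL (approximation) band after `levels` of a 2-D Haar DWT.

    With orthonormal Haar filters, each LL coefficient is the sum of a
    2x2 block of pixels divided by 2.
    """
    approx = np.asarray(image, dtype=np.float64)
    for _ in range(levels):
        h, w = approx.shape
        approx = approx[: h - h % 2, : w - w % 2]  # crop to even dimensions
        approx = (approx[0::2, 0::2] + approx[0::2, 1::2]
                  + approx[1::2, 0::2] + approx[1::2, 1::2]) / 2.0
    return approx


# A stand-in camera frame; two levels shrink each dimension by a factor of 4.
frame = np.random.default_rng(1).random((480, 640))
small = haar_approximation(frame, levels=2)
print(frame.shape, "->", small.shape)
```

<p>Each level quarters the number of pixels the focus score must process, but the decomposition itself still touches every pixel of the full frame, which is one plausible reason why the measured speed-up was not significant.</p>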


<p>Once we could determine a focus score for an image, we turned to methods for finding the most in-focus position. This raises several important issues:</p>

<ul>

<li><p><b>Delay between moving the motor and receiving an updated frame</b> - a common issue in feedback loops, from biological systems to adjusting the temperature of a shower. Failing to deal with it can cause <i>oscillations</i> around the set point, with the image remaining just out of focus most of the time. We dealt with this by introducing a delay between sending a "move motor" command and a "read focus score" command, so that each score is read at the position it is meant to describe. Reading a focus score after the motors have stopped is harmless, but reading one before the target position has been reached is not. It is therefore better to err on the side of a longer delay, at the cost of a slower autofocus.</p></li>

<li><p><b>Unreliability of motor positioning</b> - moving +400 steps and then -400 steps does not guarantee a return to exactly the same position.</p></li>

<li><p><b>Local maxima that are not the global maximum</b> - a more theoretical issue than the practical ones above: once the search reaches a region of high focus score, there is little guarantee that this is the most in-focus plane. This is in tension with the previous issue: if we "give up" a region of high focus to search elsewhere, we may find it difficult to return to it. We set this issue aside in favour of the more practical ones above, since searching for the global maximum of the focus score would make the autofocus procedure far longer than desirable. We also implemented a naive threshold, derived empirically, to stop moving the z axis once the focus was "good enough". Future work may extend this to a dynamically calculated value, or characterise the best achievable focus scores for many other types of samples.</p></li>

<li><p><b>We settled on a hill-climbing approach</b>. If the theoretical focus score were plotted against every possible z value, the graph would look a little like a hill, and the algorithm attempts to "climb" it. It evaluates the focus scores of individual frames and keeps moving in the direction in which the focus increases. If the focus decreases, the motors reverse direction and move in smaller increments. This is repeated until an exit condition is reached.</p></li>

</ul>
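<p>The hill-climbing strategy, with a settling delay before each reading and an empirical "good enough" threshold, might be sketched as below. Everything here is an assumption for illustration: <code>move_z</code> and <code>read_score</code> stand in for the real motor and camera interfaces, <code>settle_time</code>, <code>good_enough</code> and the step sizes are placeholder values rather than the empirically derived ones, and returning to the best position seen is a design choice of this sketch (subject to the positioning error noted in the list). A hypothetical simulated stage with a single focus peak replaces the hardware.</p>

```python
import math
import time
from typing import Callable, Optional, Tuple


def hill_climb_focus(
    move_z: Callable[[int], None],
    read_score: Callable[[], float],
    initial_step: int = 400,
    min_step: int = 25,
    settle_time: float = 0.2,
    good_enough: Optional[float] = None,
    max_moves: int = 200,
) -> Tuple[float, int]:
    """Climb the focus-score "hill" along z.

    Keeps moving while the score rises; when it falls, reverses direction and
    halves the step. Stops when the step drops below `min_step`, when the best
    score reaches `good_enough`, or after `max_moves` moves. Returns the best
    score and its z offset (in motor steps) from the starting position.
    """
    position = 0
    time.sleep(settle_time)
    current = read_score()
    best_score, best_position = current, position
    step, direction = initial_step, 1

    for _ in range(max_moves):
        if step < min_step:
            break
        if good_enough is not None and best_score >= good_enough:
            break
        move_z(direction * step)
        position += direction * step
        # Wait until the motor has stopped and a fresh frame is available,
        # otherwise the score may describe a position we have already left.
        time.sleep(settle_time)
        score = read_score()
        if score <= current:
            # Focus got worse: we passed the peak, so turn back with a smaller step.
            direction = -direction
            step //= 2
        current = score
        if score > best_score:
            best_score, best_position = score, position

    # Go back to the best position seen (only approximate on real motors).
    if best_position != position:
        move_z(best_position - position)
    return best_score, best_position


class SimulatedStage:
    """Toy stage whose focus score peaks at z = focus_z (hypothetical)."""

    def __init__(self, z: int = 0, focus_z: int = 1000) -> None:
        self.z = z
        self.focus_z = focus_z

    def move(self, steps: int) -> None:
        self.z += steps

    def score(self) -> float:
        return math.exp(-((self.z - self.focus_z) / 500.0) ** 2)


stage = SimulatedStage()
print(hill_climb_focus(stage.move, stage.score, settle_time=0.0), stage.z)
```

<p>A longer <code>settle_time</code> makes each step slower but avoids reading a score before the stage has arrived, matching the trade-off described for the motor delay.</p>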

<p>We tried a variety of other approaches to tackle these issues:</p>

<ul>

<li><p>Implemented the Gaussian approximation to predict the in-focus position from the interval. This assumes that the image is formed by paraxial rays only, which is a good approximation in the case of microscopic imaging.</p></li>

<li><p>Implemented the Fibonacci search algorithm, which is the optimal search algorithm for unimodal functions [2].</p></li>


<p>Gradient search, a simple technique for locating local extrema, was also attempted but did not give satisfactory results: the small learning rate required for convergence made it slow. In addition, image noise drastically disrupted the calculations, which remained a significant problem even when the gradient was calculated from multiple pictures taken around the point of interest.</p>
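<p>For comparison with the approaches listed above, a Fibonacci search over integer motor positions could be sketched as follows. This is an illustrative implementation, not the team's code: <code>f</code> is a hypothetical callable that would move the stage to position <code>z</code> and return the focus score there, the score is assumed to be unimodal on <code>[lo, hi]</code>, and results are cached so each position is measured at most once. Positions beyond <code>hi</code>, created by padding the interval to a Fibonacci length, are treated as infinitely out of focus.</p>

```python
from typing import Callable, Dict


def fibonacci_search(f: Callable[[int], float], lo: int, hi: int) -> int:
    """Return the integer z in [lo, hi] that maximises a unimodal f."""
    if hi < lo:
        raise ValueError("empty interval")
    cache: Dict[int, float] = {}

    def g(z: int) -> float:
        if z > hi:
            return float("-inf")  # padding beyond the real range
        if z not in cache:
            cache[z] = f(z)  # one (expensive) measurement per position
        return cache[z]

    # Fibonacci numbers F_0 = 0, F_1 = 1, ... up to the first F_k >= hi - lo + 2,
    # so the open interval (lo - 1, lo - 1 + F_k) covers every position in [lo, hi].
    fib = [0, 1]
    while fib[-1] < hi - lo + 2:
        fib.append(fib[-1] + fib[-2])
    k = len(fib) - 1
    a = lo - 1  # invariant: the maximum lies strictly inside (a, a + fib[k])

    while k > 3:
        x1, x2 = a + fib[k - 2], a + fib[k - 1]
        if g(x1) < g(x2):
            a = x1  # the peak is to the right of x1
        # otherwise the peak is to the left of x2, so `a` stays put
        k -= 1  # one of x1, x2 is reused as an interior point next round
    return a + 1  # the interval now contains a single position


# Toy unimodal focus curve peaking at z = 737 (hypothetical data).
evaluations = []


def toy_focus(z: int) -> float:
    evaluations.append(z)
    return -float((z - 737) ** 2)


print(fibonacci_search(toy_focus, 0, 2000), len(evaluations))
```

<p>For the 2,001 positions in this toy range the search needs at most 16 measurements, versus 2,001 for an exhaustive scan. On real hardware each measurement is also a motor move, so the positioning error and settling delay discussed earlier still apply.</p>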

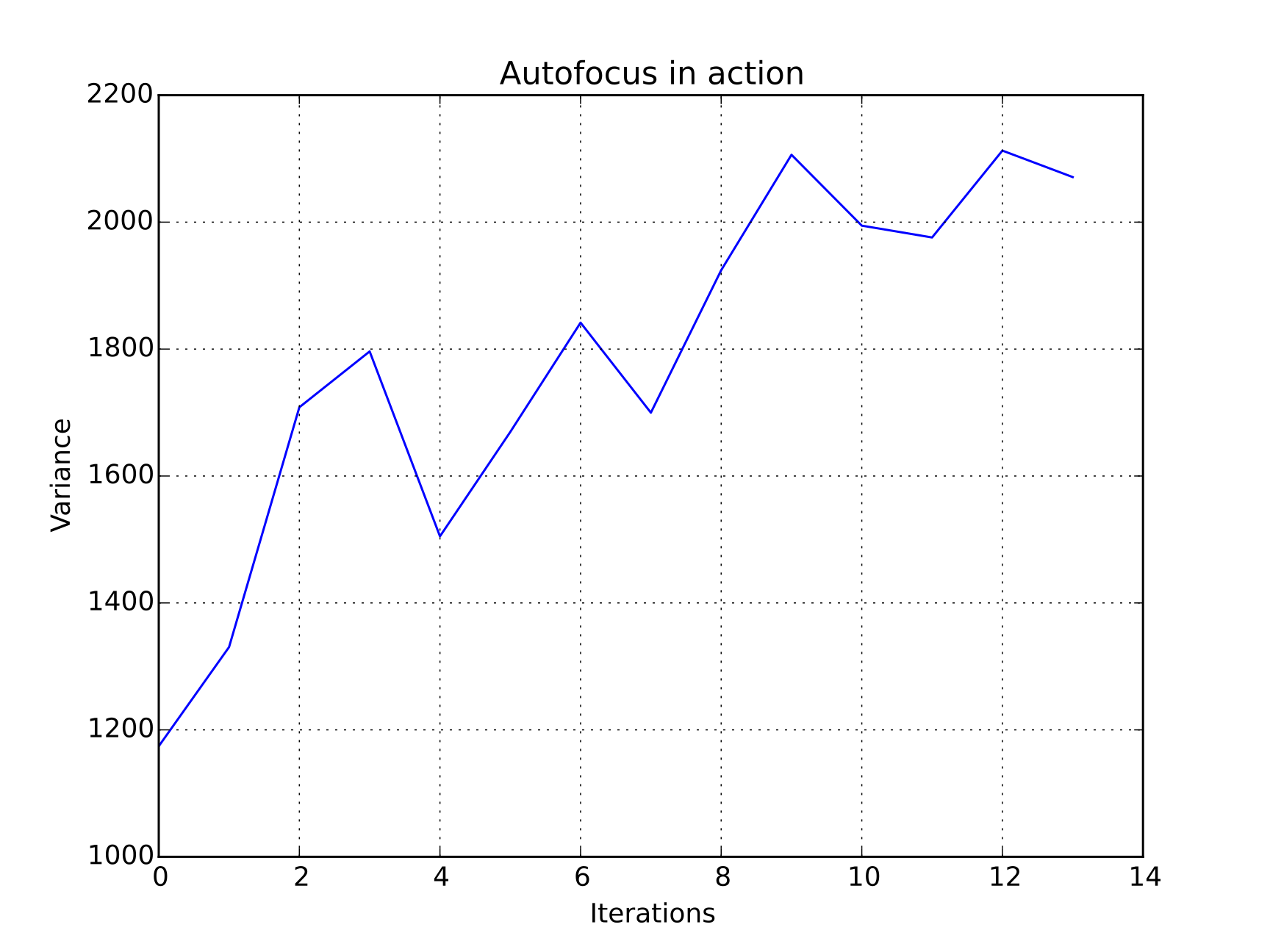

<center><img src="//2015.igem.org/wiki/images/d/da/CamJIC-Software-Autofocus-Example.jpg" style="width:40%;margin:10px"><img src="//2015.igem.org/wiki/images/b/b1/CamJIC-Software-Autofocus-Graph.png" style="width:40%;margin:10px"><p><i>Autofocus algorithm in action: the plot shows the increase of the variance (i.e. the focus score) of the image with each iteration.</i></p></center>

<p>The performance of the final autofocus algorithm varies, depending on the starting point of the search. A typical processing time is around 5 s, which allows autofocus during live-stream imaging through the <a href="" class="blue">Webshell</a>.</p>

<p>The autofocus algorithm we have implemented is open to further improvement. Ideas for future development include a dynamic threshold to signal that maximum focus has been reached (and hence stop the search), automatic measurement of the actual height to the CCD, and better motors to improve precision.</p>

<hr>

<center><p><i>The autofocus algorithm we have developed can be found in the <a href="" class="blue">full software package</a>. It was developed by Rajiv, with useful feedback and advice from the rest of the Software team (Will, Ocean and Souradip).</i></p></center>

<hr>

<p style="font-size:80%">References:<br>[1] Firestone, L., Cook, K., Culp, K., Talsania, N. and Preston, K. (1991). Comparison of autofocus methods for automated microscopy. <i>Cytometry</i>, 12(3), pp. 195-206.<br>[2] Wu, Q., Merchant, F. and Castleman, K. (2008). <i>Microscope image processing</i>. Amsterdam: Elsevier/Academic Press.</p>

Revision as of 02:37, 18 September 2015